我正在尝试在数据帧列上应用 spaCys 标记生成器来获取包含标记列表的新列。

假设我们有以下数据框:

import pandas as pd

details = {

'Text_id' : [23, 21, 22, 21],

'Text' : ['All roads lead to Rome',

'All work and no play makes Jack a dull buy',

'Any port in a storm',

'Avoid a questioner, for he is also a tattler'],

}

# creating a Dataframe object

example_df = pd.DataFrame(details)

下面的代码旨在标记 Text 列:

import spacy

nlp = spacy.load("en_core_web_sm")

example_df["tokens"] = example_df["Text"].apply(lambda x: nlp.tokenizer(x))

example_df

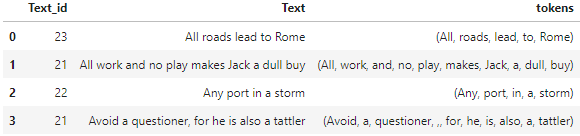

结果如下:

现在,我们有一个新列 tokens,它为每个句子返回 doc 对象。

我们如何更改代码来获取 标记化单词的 python 列表?

我尝试过以下行:

example_df["tokens"] = example_df["Text"].apply(token.text for token in (lambda x: nlp.tokenizer(x)))

但我有以下错误:

TypeError Traceback (most recent call last)

/tmp/ipykernel_33/3712416053.py in <module>

14 nlp = spacy.load("en_core_web_sm")

15

---> 16 example_df["tokens"] = example_df["Text"].apply(token.text for token in (lambda x: nlp.tokenizer(x)))

17

18 example_df

TypeError: 'function' object is not iterable

提前谢谢您!

更新:我有一个解决方案,但我还有另一个问题。我想使用内置类 Counter 来计算单词数,该类将列表作为输入,并且可以使用 update 函数使用其他文档的标记列表进行增量更新。下面的代码应该返回数据框中每个单词出现的次数:

from collections import Counter

# instantiate counter object

counter_df = Counter()

# call update function of the counter object in update the counts

example_df["tokens"].map(counter_df.update)

但是,输出是:

0 None

1 None

2 None

3 None

Name: tokens, dtype: object

预期输出必须类似于:

Counter({'All': 2, 'roads': 1, 'lead': 1, 'to': 1, 'Rome': 1, 'work': 1, 'and': 1, 'no': 1, 'play': 1, 'makes': 1, 'a': 4, 'dull':1, 'buy':1, 'Any':1, 'port':1, 'in': 1, 'storm':1, 'Avoid':1, 'questioner':1, ',':1, 'for':1, 'he':1})

再次感谢您:)

最佳答案

你可以使用

example_df["tokens"] = example_df["Text"].apply(lambda x: [t.text for t in nlp.tokenizer(x)])

查看 Pandas 测试:

import pandas as pd

details = {

'Text_id' : [23, 21, 22, 21],

'Text' : ['All roads lead to Rome',

'All work and no play makes Jack a dull buy',

'Any port in a storm',

'Avoid a questioner, for he is also a tattler'],

}

# creating a Dataframe object

example_df = pd.DataFrame(details)

import spacy

nlp = spacy.load("en_core_web_sm")

example_df["tokens"] = example_df["Text"].apply(lambda x: [t.text for t in nlp.tokenizer(x)])

print(example_df.to_string())

输出:

Text_id Text tokens

0 23 All roads lead to Rome [All, roads, lead, to, Rome]

1 21 All work and no play makes Jack a dull buy [All, work, and, no, play, makes, Jack, a, dull, buy]

2 22 Any port in a storm [Any, port, in, a, storm]

3 21 Avoid a questioner, for he is also a tattler [Avoid, a, questioner, ,, for, he, is, also, a, tattler]

关于python - 如何使用 spaCy 从数据框列创建标记化单词列表?,我们在Stack Overflow上找到一个类似的问题: https://stackoverflow.com/questions/73082256/